Long post

Sorry. This is a long showcase.

It will cover:

- AI Vectors

- AI sentiment analysis

- AI text generation

Experience

I should start by saying that I didn’t know what Retool was a little over a year ago. I’m not a software developer, and I’ve never been a software developer. I run a sustainability agency, and I like making systems and processes that make our lives easier. I have been helped largely by the Retool Community, ChatGPT and the occasional developer found on Upwork.

Then, with the new built-in features for interacting with (Open)AI, I wanted to try out an idea, which we have been developing and playing with ever since.

Context

There’s a concept in sustainability reporting called Materiality: the idea that what is important to one company may not be important to another, and vice versa. For example, health & safety might be material to an oil company such as BP or Exxon, but less material to an office-based company such as Retool or Cisco. Stress and workplace wellbeing, however, might be material to the office-based company and not to the oil company. You get the gist.

Companies must (regulation dictates this now) perform a materiality assessment to determine what is material to them. This is what we do for companies. The result usually dictates what they must report.

Traditionally, this involves a lot of research in its first phase to get a long list of topics (which is shortlisted later by the company’s stakeholders). But as sustainability rises up companies’ agendas and regulation tightens (especially in Europe), more companies must do this. So we have been developing an app, with the help of Retool and AI, to make this research quicker.

Solution

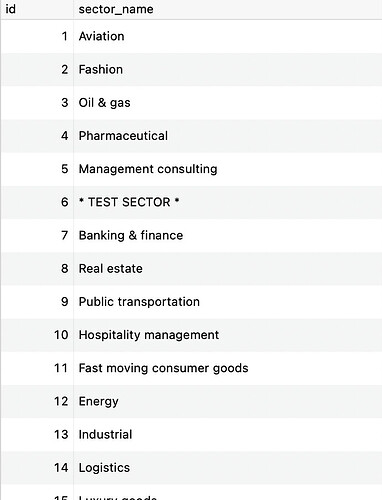

Our database (a PostgreSQL database hosted on AWS RDS) holds a lot of our own data, including sectors (Oil & Gas, Aviation etc.) along with the companies linked to these sectors (BP, Exxon, United Airlines, KLM etc.).

Researching with AI

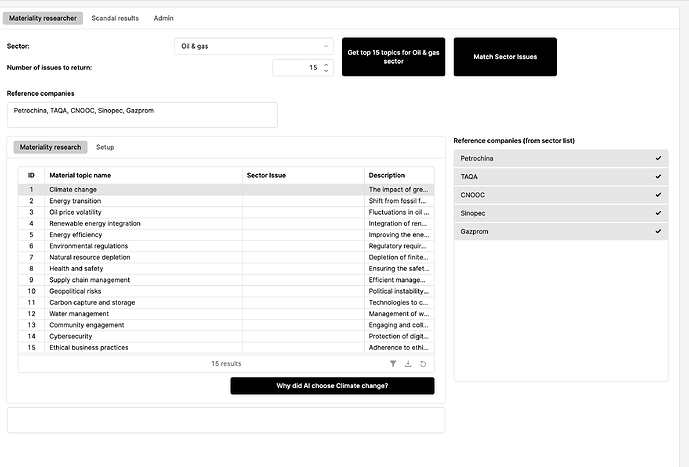

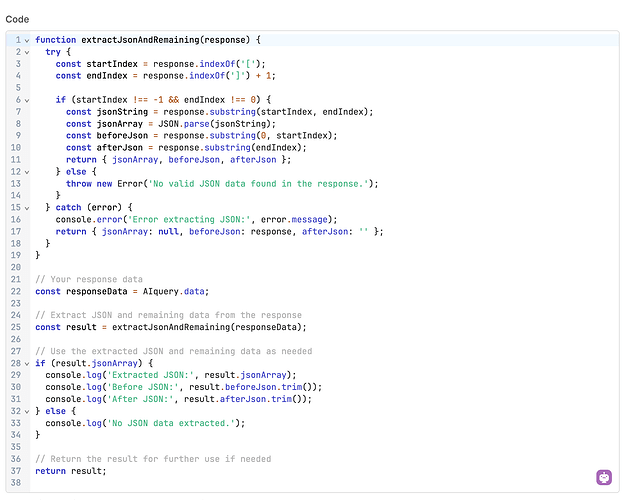

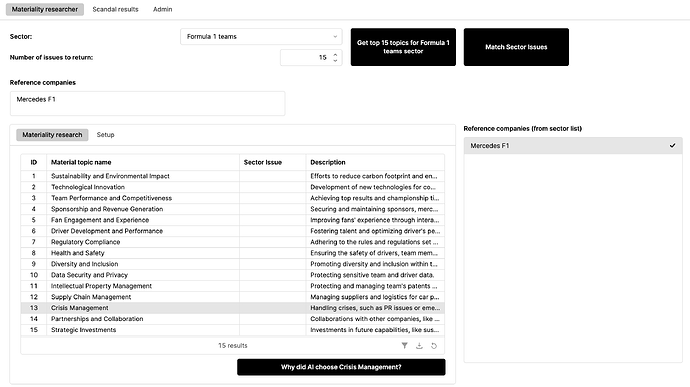

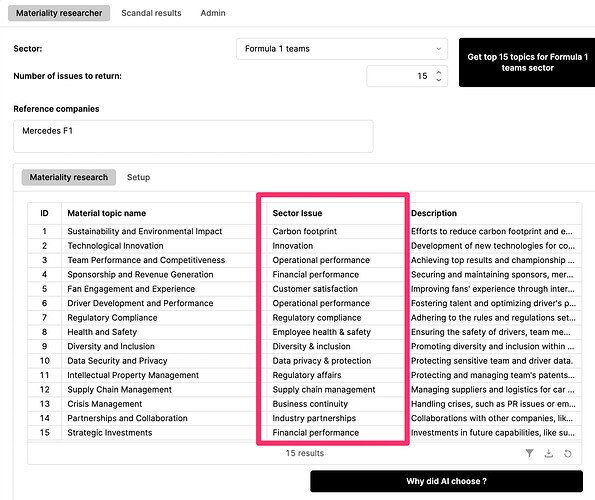

Using AI, we were able to provide the sector name, some reference companies and some clever prompting to carry out the research. We then ask the AI to return the results as JSON, strip out anything else, and load them into our table.
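The "strip out anything else" step can be sketched roughly like this. This is only an illustration in Python, not the code from our Retool app: the function name `extract_json` and the sample reply are made up for the example. It scans the model's reply for the first valid JSON object or array and ignores the chatty text around it.

```python
import json


def extract_json(reply: str):
    """Return the first JSON object or array found in an LLM reply, or None."""
    decoder = json.JSONDecoder()
    for index, char in enumerate(reply):
        if char in "{[":
            try:
                # raw_decode parses one JSON value starting at `index`
                # and ignores whatever text follows it.
                value, _end = decoder.raw_decode(reply, index)
                return value
            except json.JSONDecodeError:
                # Not valid JSON from this bracket; keep scanning.
                continue
    return None


# Hypothetical reply, for illustration only.
reply = (
    'Here are the topics: [{"topic": "Driver safety"}, '
    '{"topic": "Carbon emissions"}] Let me know if you need more.'
)
topics = extract_json(reply)
print([row["topic"] for row in topics])
```

If no JSON is found the function returns `None`, so the table load can be skipped instead of failing on a badly formatted answer.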

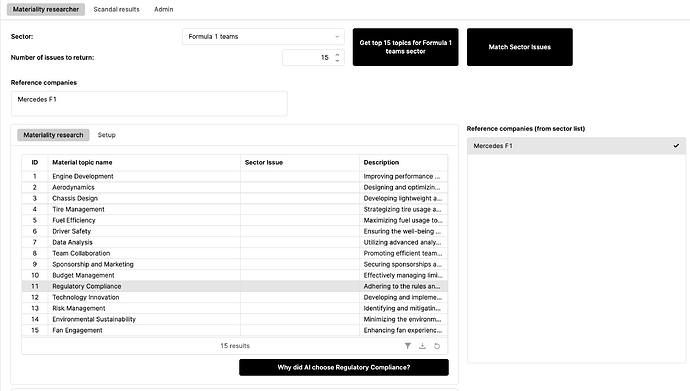

The results are decent, but sometimes not based on recent data. For example, if we search for the F1 teams sector and use ‘Mercedes F1’ as a reference company, we don’t necessarily get results based on recent events, such as the investigation into the Red Bull team boss for alleged inappropriate behaviour.

Before training AI using Retool vectors

So we get a list of topics, which is great, but it's not based on recent events.

We realized that if we could somehow give our AI the most recent news, it might be more accurate, because the LLM itself is not using up-to-the-minute sources.

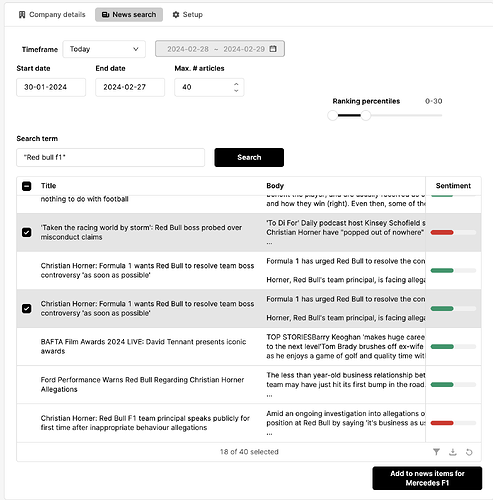

So, to get the most recent news, we built a small news app. This means we can now search the news and filter by country, language etc.

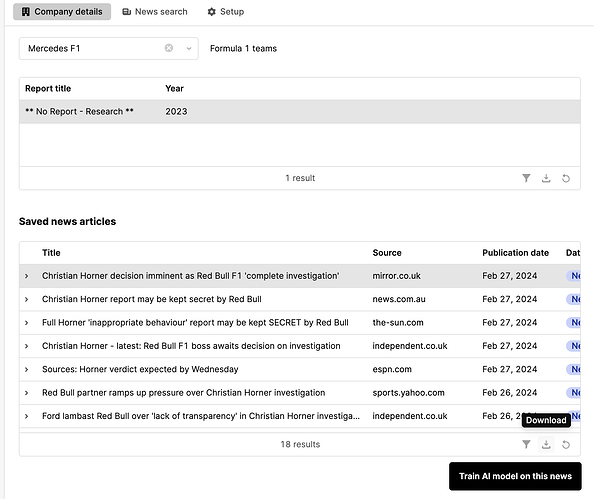

Once we have our list of news articles, we can review them. Sometimes a headline review is enough; sometimes we read a bit of the article. Based on what we think, we then save the relevant ones to our Company record.

This is useful in itself when we come to write the company’s sustainability report, but where it becomes really powerful is that we can commit this to our AI model (our Retool Vector database). We were able to do this, although not fully how we would like (feature request @Retool: dynamically create vectors with custom ID/names).

More informed research

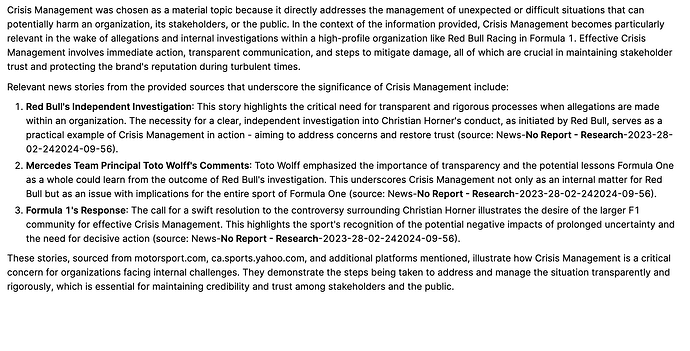

Now, when we run our materiality research (jump back to our Materiality bot app), we are also able to reference the vector storage for that company (or sector), and our results are a lot more accurate because they use the most up-to-date news as a source.
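For anyone wondering what "referencing the vector storage" means in practice, here is a rough sketch of the idea in Python. It is not how Retool Vectors works internally: the `embed` function is a toy word-count stand-in for a real embedding model, and all function names and sample headlines are invented for the example. The point is only that the saved articles most similar to the query get pulled into the prompt.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding: lowercase word counts (an assumption)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[word] * b[word] for word in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def top_articles(query: str, articles: list[str], k: int = 2) -> list[str]:
    """Return the k saved articles most similar to the query."""
    query_vector = embed(query)
    ranked = sorted(
        articles, key=lambda text: cosine(query_vector, embed(text)), reverse=True
    )
    return ranked[:k]


def build_prompt(sector: str, company: str, articles: list[str]) -> str:
    """Put the most relevant saved news into the research prompt."""
    context = "\n".join(
        f"- {text}" for text in top_articles(f"{sector} {company}", articles)
    )
    return (
        f"Sector: {sector}\nReference company: {company}\n"
        f"Recent news:\n{context}\n"
        "List the material sustainability topics as JSON."
    )


# Made-up headlines, for illustration only.
saved_news = [
    "Red Bull team boss investigated over alleged inappropriate behaviour",
    "Mercedes F1 announces sustainable fuel trial",
    "KLM orders new aircraft",
]
print(build_prompt("F1 teams", "Mercedes F1", saved_news))
```

A real setup would use proper embeddings, which can match on meaning rather than exact words, but the retrieve-then-prompt flow is the same.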

After training AI using Retool vectors (we added some recent news articles)

We also created an AI prompt that asks the model to explain why it picked each topic and to give its sources.

AI sentiment comparison/analysis

As a final step, we are able to use the AI sentiment analysis to compare the issues found in the research and match them with the sector topics in our database.

Why is this important?

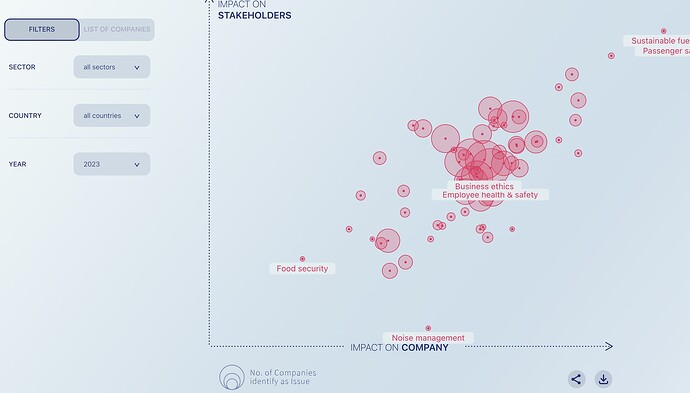

Well, because something we do (for fun at this point) is combine all the material topics of all the companies and create a world view of materiality and material topics, which we can then drill down by sector, year, country etc. To make this comparable, we need to categorize the companies’ material topics into sector topics, which means we can use around 100 topics instead of 1000s.
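To show what that categorization step does, here is a small Python sketch. We use AI for the matching in our app; the version below swaps in simple word overlap (Jaccard similarity) as a stand-in so the example runs on its own. The function names, threshold and sample topics are all assumptions made for illustration.

```python
import re
from collections import Counter


def words(text: str) -> set[str]:
    """Lowercase word set of a topic name."""
    return set(re.findall(r"[a-z]+", text.lower()))


def map_to_sector_topic(company_topic, sector_topics, threshold=0.15):
    """Return the sector topic sharing the most words with company_topic, or None."""
    target = words(company_topic)
    best, best_score = None, 0.0
    for topic in sector_topics:
        candidate = words(topic)
        union = target | candidate
        # Jaccard similarity: shared words divided by all words.
        score = len(target & candidate) / len(union) if union else 0.0
        if score > best_score:
            best, best_score = topic, score
    return best if best_score >= threshold else None


# Made-up topics, for illustration only.
sector_topics = ["Health & safety", "Workplace wellbeing", "Carbon emissions"]
company_topics = [
    "Occupational health and safety",
    "Employee wellbeing and stress",
    "Safety of our people",
]

# Count how often each sector topic appears once company topics are mapped.
counts = Counter(map_to_sector_topic(t, sector_topics) for t in company_topics)
print(counts)
```

Topics that match nothing come back as `None`, which is a useful signal that they need a manual look or a new sector topic.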

This Materiality monitor is just a test project of ours, which was built by a developer in React (JavaScript).

What’s next

Materiality monitor

We’d love to use GPT Vision and Retool to read the images of companies’ materiality matrices (companies that we have not performed the analysis for, I should add), so we can increase our dataset to 1000s of companies instead of hundreds, as it’s very labour-intensive doing it manually. However, so far we have found that the results are not consistent or accurate enough. If we can get to a point where they are, we can run a Retool workflow on 100s of images and populate our Materiality monitor dataset.

Materiality research

On the materiality research bot, we’d like to develop and test this further with live news sources and then perhaps release it as a client-facing product. However, we need a developer to help now, as my knowledge is really at its limits, and we still have lots of other exciting sustainability consulting to do!!