Hi @Aydan_McNulty,

Thanks for reaching out!

I took some inspiration from this post here to come up with a solution (it's a bit hacky, though).

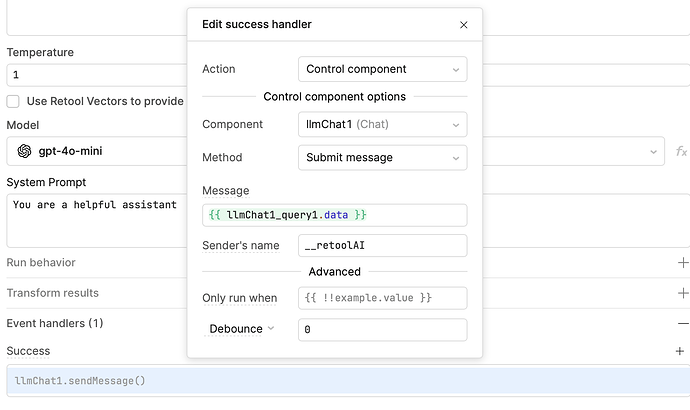

You could trigger a submit message event that posts the LLM response as a chat message from __retoolAI:

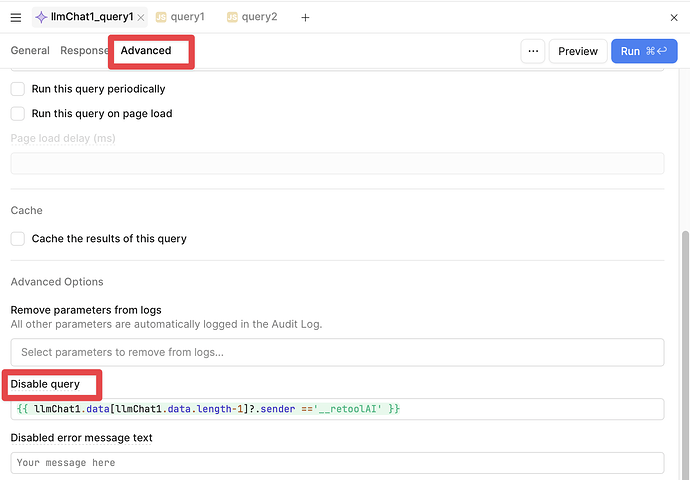

Please note that simply adding this event caused an endless loop, so you'll want to add some logic that prevents this query from running when the latest message is from __retoolAI. Something like:

{{ llmChat1.data[llmChat1.data.length-1]?.sender =='__retoolAI' }}
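To make the guard easier to reason about, here's a rough sketch of the same check as a plain JavaScript function. `shouldRunQuery` and `AI_SENDER` are hypothetical names I made up for illustration, and `messages` stands in for `llmChat1.data`; the only field I'm relying on is `sender`, as in the expression above. Treating an empty chat as "don't run" is an extra assumption on my part, so adjust it to your use case.

```javascript
// Hypothetical helper: decides whether the follow-up query should run.
// `messages` stands in for llmChat1.data; only `sender` comes from the post above.
const AI_SENDER = '__retoolAI';

function shouldRunQuery(messages) {
  // Assumption: with no messages there is nothing to respond to, so skip the run.
  if (!Array.isArray(messages) || messages.length === 0) {
    return false;
  }
  const latest = messages[messages.length - 1];
  // Skip when the latest message was posted as __retoolAI,
  // since re-running on that message is what produced the endless loop.
  return latest?.sender !== AI_SENDER;
}
```

Note that this returns the opposite of the expression above: the expression is true when the latest message is from __retoolAI (useful as a "disable" condition), while the function is true when it's safe to run.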

There could be better ways to solve this, but hopefully this gives you some ideas.

I haven't done a ton of testing, so I'd recommend testing against different edge cases to make sure it works as you expect.

Given your use case, you might be interested in checking out our new Agents product:

🚀 Launch Day: Retool Agents is here