Hello,

I'm now successfully running Retool on-prem with an external Postgres database in Azure for the Retool settings and apps.

I've changed these settings in docker.env. I've used a connection string as the separate values didn't work, perhaps because there is no setting for SSL?
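For reference, here is a minimal Python sketch of how a connection string like the one I used breaks down into the separate values. It assumes the standard `postgres://user:password@host:port/db?sslmode=...` URL form. The helper name `split_postgres_url` and the example credentials are made up for illustration, and whether Retool accepts an SSL option alongside the separate `POSTGRES_*` values is not something this confirms.

```python
from urllib.parse import urlsplit, parse_qs, unquote


def split_postgres_url(url: str) -> dict:
    """Split a postgres:// URL into values matching the POSTGRES_* names in docker.env.

    Hypothetical helper for illustration only; it is not part of Retool.
    """
    parts = urlsplit(url)
    query = parse_qs(parts.query)
    return {
        "POSTGRES_HOST": parts.hostname,
        # No port in the URL means the Postgres default, 5432.
        "POSTGRES_PORT": parts.port or 5432,
        "POSTGRES_DB": parts.path.lstrip("/"),
        # User and password may be percent-encoded inside a URL.
        "POSTGRES_USER": unquote(parts.username or ""),
        "POSTGRES_PASSWORD": unquote(parts.password or ""),
        # sslmode only exists in the URL form; None if absent.
        "sslmode": query.get("sslmode", [None])[0],
    }


# Placeholder values, not real credentials.
example = "postgres://myuser:s3cret%2F@myserver.database.azure.com/retool?sslmode=require"
settings = split_postgres_url(example)
for key, value in settings.items():
    print(f"{key}={value}")
```

The `sslmode` entry is the one piece that has no counterpart among the separate values in my env file, which fits my guess about why only the connection string worked. Note also that a password containing characters such as `/` has to be percent-encoded when placed in a URL.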

I can see in the env file that there are two blocks of settings for the Postgres database. The first one is clearly Retool's own settings database. Is the second one perhaps the internal/storage database?

## Set and generate postgres credentials

# POSTGRES_DB=hammerhead_production

# POSTGRES_USER=retool_internal_user

# POSTGRES_HOST=postgres

# POSTGRES_PORT=5432

# POSTGRES_PASSWORD=cicYVqwpjhWZDYnZtPctEAEqKr2o3lY25vY

DATABASE_URL=postgres://xxxxxx:xxxxxxxx@xxxxxx.database.azure.com/retool?sslmode=require

## Set and generate retooldb postgres credentials

RETOOLDB_POSTGRES_DB=postgres

RETOOLDB_POSTGRES_USER=root

RETOOLDB_POSTGRES_HOST=retooldb-postgres

RETOOLDB_POSTGRES_PORT=5432

RETOOLDB_POSTGRES_PASSWORD=OYhuVQLvz8LduYidpW9WGRFwzgn3D/

Now I also want to move the internal/storage Postgres database to Azure.

So what I've done so far:

- Created a new database in Azure (same server as the Retool settings database) with the same credentials.

- Followed the instructions from here and filled in the details for the new database. The connection test was successful.

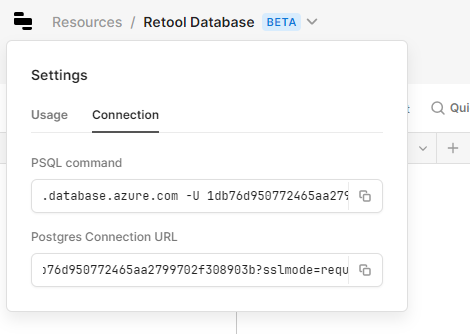

However, when I go to the database in Retool and check the connection settings here:

I see that the "database" is just a random string. When looking at the Azure Postgres server, I can also see that two new databases have been created with random names, and one of them matches the one in the connection string. Oddly, I don't seem to have the privileges to access them with pgAdmin or HeidiSQL.

I've tried adding the database again by filling out the form at {your-domain}/resources?setupRetoolDB=1, and again two new databases with random names were created.

Any idea what I'm doing wrong? And how I can move the database?

BTW, (how) can I disable the Postgres containers in docker-compose.yml once both databases are external? They won't need to spin up anymore.

Thanks